Establishing the Validity and Reliability of a Program Evaluation Questionnaire using Rasch Measurement Model

Abstract

This study established the validity and reliability of the evaluation questionnaire for the 12th Regional Congress of the Search for SEAMEO Young Scientists (SSYS) 2022 using the Rasch Measurement Model, with the analysis carried out in the Winsteps software. The questionnaire contains 24 items that evaluate the Congress's objectives, inputs, and event management and administration. Each item is rated on a 4-point rating scale. The instrument was administered at the end of the 3-day SSYS Congress, which was held virtually, and 1891 participants submitted their responses. Establishing the validity and reliability of the questionnaire is crucial before further analysis is carried out. The Rasch analysis showed a person reliability index of 0.87 with a person separation index of 2.60, and an item reliability index of 0.96 with an item separation index of 5.08. For item polarity, the point-measure correlation (PTMEA CORR) of the 24 items ranged from 0.67 to 0.76. In terms of item fit, the results identified one misfitting item that needs improvement in the future. The Principal Component Analysis (PCA) showed that almost all the items are unidimensional and measure a similar trait. Taken together, these results support the validity and reliability of the questionnaire, and researchers can proceed with further data analysis to evaluate the 12th Regional Congress of the SSYS 2022.
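
The reliability and separation indices reported above are linked by the standard Rasch relation R = G² / (1 + G²), where G is the separation index and R the reliability index. The following is a minimal sketch, not the authors' procedure, that checks the reported pairs against this relation. The function names are illustrative assumptions and are not part of Winsteps.

```python
import math


def reliability_from_separation(g: float) -> float:
    """Reliability R implied by a separation index G: R = G^2 / (1 + G^2)."""
    return g ** 2 / (1 + g ** 2)


def separation_from_reliability(r: float) -> float:
    """Inverse relation: G = sqrt(R / (1 - R)), defined for 0 <= R < 1."""
    if not 0 <= r < 1:
        raise ValueError("reliability must lie in [0, 1)")
    return math.sqrt(r / (1 - r))


if __name__ == "__main__":
    # Separation values as reported in the abstract.
    reported = {"person": 2.60, "item": 5.08}
    for label, g in reported.items():
        r = reliability_from_separation(g)
        print(f"{label}: separation {g:.2f} -> reliability {r:.2f}")
```

Rounded to two decimals, a person separation of 2.60 gives a reliability of 0.87 and an item separation of 5.08 gives 0.96, which agrees with the values reported in the abstract.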

Copyright and Permissions

Authors who publish with this journal agree to the following terms:

Authors retain copyright and grant the journal right of first publication with the work simultaneously licensed under a Creative Commons Attribution License that allows others to share the work with an acknowledgement of the work's authorship and initial publication in this journal.

Authors are able to enter into separate, additional contractual arrangements for the non-exclusive distribution of the journal's published version of the work (e.g., post it to an institutional repository or publish it in a book), with an acknowledgement of its initial publication in this journal.

Authors are permitted and encouraged to post their work online (e.g., in institutional repositories or on their website) prior to and during the submission process, as it can lead to productive exchanges, as well as earlier and greater citation of published work (See The Effect of Open Access).

Dinamika Jurnal Ilmiah Pendidikan Dasar is licensed under a Creative Commons Attribution 4.0 International License.

Data Availability

Share this

sidebar

Browse

Keywords

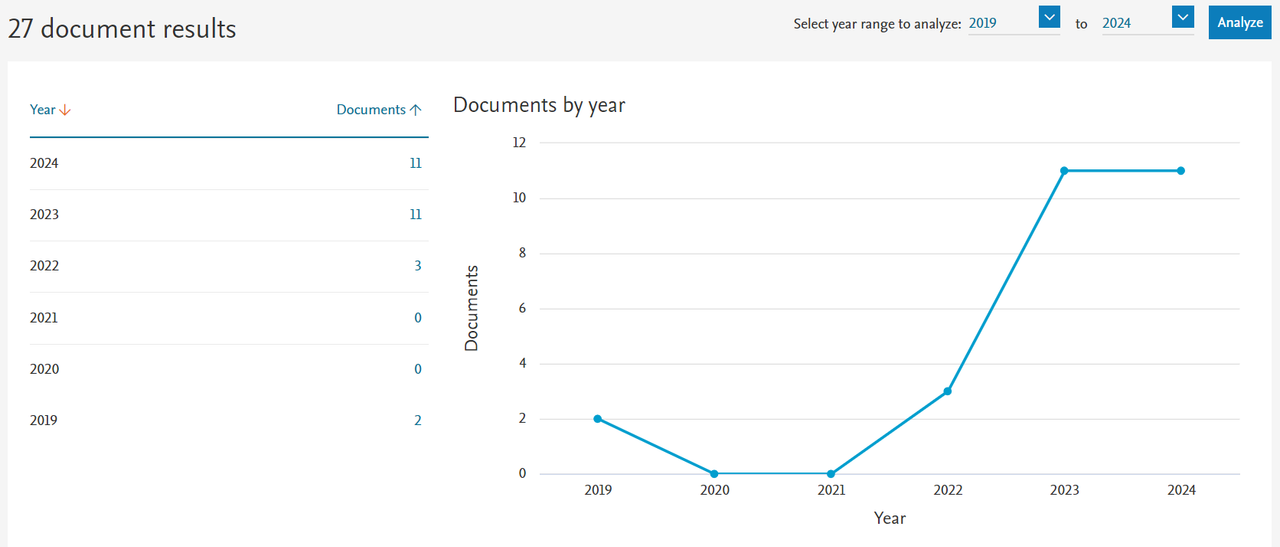

citedness

statcounter

Visitors